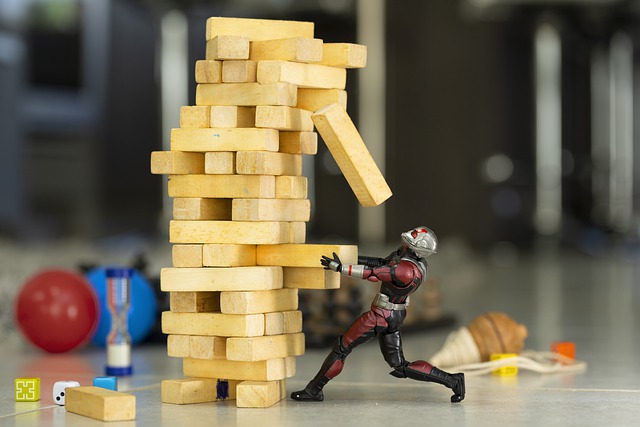

Blitzscaling

Tips and tricks gained from experience to scale your tech stack really, really fast.

Your company has planned a massive marketing campaign. Millions of households are going to see the company’s advertisement during a prime-time television show, and an enormous number of users are expected to try out your company’s application. You, the engineer, have been given a couple of weeks to scale the tech stack to handle this massive traffic surge.

What do you do?

I am assuming that you are using a public cloud. If not, good luck (and use this opportunity to make the case to your decision-makers for moving to the cloud).

Preliminary

Get a rough sense of the size of the traffic surge; ask your marketing team for a guesstimate. Use this number as the anchor for all the subsequent steps.
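One way to turn a marketing guesstimate into an engineering number is to convert it into requests per second. The sketch below assumes that everyone who tries the app arrives within a fixed window after the ad airs and spreads their requests evenly across that window. The function name and all inputs (2 million viewers, a 5% try rate, 20 requests per user, a 10-minute window) are hypothetical placeholders for your own estimates.

```python
import math

def estimate_peak_rps(viewers: int, try_rate: float, requests_per_user: float,
                      arrival_window_s: float) -> float:
    """Average requests/second over the arrival window (hypothetical model).

    Assumes every user who tries the app shows up within arrival_window_s
    seconds and that their requests are spread evenly over that window.
    Real arrivals are spikier, so the instantaneous peak will be higher.
    """
    users = viewers * try_rate
    return users * requests_per_user / arrival_window_s

# Hypothetical inputs: 2M viewers, 5% try the app, 20 requests each,
# all within 10 minutes of the ad airing.
peak = estimate_peak_rps(2_000_000, 0.05, 20, 600)
print(f"~{math.ceil(peak):,} requests/second")
```

Because real traffic arrives in bursts, treat this figure as a lower bound and pad it before using it in later steps.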

Horizontal scaling is the mantra

All cloud providers offer some form of autoscaling: on-demand addition of servers when specific conditions are met. Use it to the hilt. Figure out the thresholds that should trigger autoscaling. There is a lag between a condition being met and the new server taking traffic, so plan for a margin of safety. When the entire world’s eyes are on you, err on the side of over-provisioning rather than under-provisioning.
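Both the fleet size and the autoscaling lag mentioned above can be reduced to back-of-the-envelope arithmetic. The sketch below is one possible model. It assumes you have measured how many requests per second one server can handle, that traffic ramps roughly linearly, and that utilization is expressed as a fraction of fleet request capacity. Cloud autoscalers usually trigger on a proxy such as CPU utilization, so you would need to map the result onto that. The function names and numbers are hypothetical.

```python
import math

def servers_needed(peak_rps: float, rps_per_server: float, headroom: float = 0.5) -> int:
    """Servers needed at peak, over-provisioned by `headroom` (0.5 = 50% extra)."""
    if rps_per_server <= 0:
        raise ValueError("rps_per_server must be positive")
    return math.ceil(peak_rps * (1 + headroom) / rps_per_server)

def scale_out_threshold(fleet_capacity_rps: float, ramp_rps_per_min: float,
                        provisioning_lag_min: float) -> float:
    """Utilization (0..1) at which scale-out must already be triggered.

    While a new server boots, traffic keeps climbing by
    ramp_rps_per_min * provisioning_lag_min. The current fleet has to absorb
    that growth on its own, so scaling must start while at least that much
    spare capacity remains.
    """
    spare_needed = ramp_rps_per_min * provisioning_lag_min
    threshold = 1 - spare_needed / fleet_capacity_rps
    # 0.0 means autoscaling cannot react in time: pre-provision instead.
    return max(threshold, 0.0)

# Hypothetical: 30k rps peak, 500 rps per server, 50% headroom.
print(servers_needed(30_000, 500))
# Hypothetical: 10k rps fleet, traffic rising 1k rps/min, 3-minute boot time.
print(round(scale_out_threshold(10_000, 1_000, 3), 2))
```

If the threshold comes out at or near zero, the ramp is too steep for reactive scaling, which is exactly the case where over-provisioning ahead of the campaign pays off.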

If you rely on sticky sessions or server-local state that spans multiple requests, now is the time to refactor your application to be stateless, on a war footing. Autoscaling only helps if any server can handle any request.
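A minimal sketch of what "stateless" means in practice: the server object keeps no per-user data, and every request reads and writes session state in a store shared by all servers. Here an in-memory class stands in for an external store such as Redis. `SharedSessionStore`, `AppServer`, and `add_to_cart` are hypothetical names for illustration, not a real library's API.

```python
from __future__ import annotations

import json

class SharedSessionStore:
    """In-memory stand-in for an external store (e.g. Redis) shared by all servers.

    Values are serialized to JSON, as they would be when crossing the network,
    so no server can keep a live reference to another request's state.
    """
    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def get(self, key: str) -> dict | None:
        raw = self._data.get(key)
        return None if raw is None else json.loads(raw)

    def set(self, key: str, value: dict) -> None:
        self._data[key] = json.dumps(value)

class AppServer:
    """A stateless server: all per-user state lives in the shared store."""
    def __init__(self, name: str, store: SharedSessionStore) -> None:
        self.name = name
        self.store = store  # no per-user fields on the server itself

    def add_to_cart(self, session_id: str, item: str) -> list[str]:
        session = self.store.get(session_id) or {"cart": []}
        session["cart"].append(item)
        self.store.set(session_id, session)
        return session["cart"]

# Two servers behind a load balancer; one user's requests land on both.
store = SharedSessionStore()
a, b = AppServer("a", store), AppServer("b", store)
a.add_to_cart("session-42", "book")
print(b.add_to_cart("session-42", "pen"))  # ['book', 'pen']
```

The read-modify-write in `add_to_cart` is not safe against two concurrent requests for the same session. A real store would need an atomic operation (such as appending to a list on the server side) for that.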

Make scaling someone else’s problem

A CDN is the closest thing to a one-word answer to scaling problems. Wherever possible, offload traffic to a CDN: every request served from the CDN’s cache is one less hit to your backend.
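The arithmetic behind "one less hit to your backend" is simple: the origin only sees cache misses. The sketch below uses a hypothetical surge of 50,000 requests per second to show how steeply origin load falls as the CDN hit ratio rises. Going from 90% to 99% hits cuts origin traffic tenfold.

```python
def origin_rps(total_rps: float, cdn_hit_ratio: float) -> float:
    """Requests per second that miss the CDN cache and reach your backend."""
    if not 0.0 <= cdn_hit_ratio <= 1.0:
        raise ValueError("cdn_hit_ratio must be between 0 and 1")
    return total_rps * (1.0 - cdn_hit_ratio)

# Hypothetical surge of 50,000 requests/second at different hit ratios.
for ratio in (0.0, 0.90, 0.99):
    print(f"hit ratio {ratio:.0%}: ~{origin_rps(50_000, ratio):,.0f} rps reach the origin")
```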

Like a CDN, many managed services scale seamlessly on your behalf: Firebase, DynamoDB, etc. Leverage them to the maximum.

Cache, cache, cache, and cache some more

When one thinks of a cache, Redis or Memcached come to mind; do not restrict yourself to these.

Visualize your application in layers and see what can be cached (sometimes implicitly) at each of those layers: client, proxy servers, load balancer, web server, application server, middleware, database, and the operating system.
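As an example of caching at the application-server layer, here is a small time-to-live memoization decorator. It is a sketch, not a replacement for a shared cache. Each server keeps its own copy, so entries can be up to `ttl_seconds` stale, and the cache is unbounded, which suits only a small, known set of keys such as configuration or reference data. `ttl_cache` and `load_config` are hypothetical names, and the clock is injectable purely so the behavior can be tested.

```python
import functools
import time
from typing import Any, Callable, Dict, Tuple

def ttl_cache(ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
    """Memoize a function's results in process memory for ttl_seconds.

    Only positional, hashable arguments are supported. Concurrent misses for
    the same key may both call the wrapped function; that is tolerated here.
    """
    def decorator(fn):
        entries: Dict[Tuple[Any, ...], Tuple[float, Any]] = {}

        @functools.wraps(fn)
        def wrapper(*args):
            now = clock()
            hit = entries.get(args)
            if hit is not None and now - hit[0] < ttl_seconds:
                return hit[1]              # fresh entry: skip the expensive call
            value = fn(*args)
            entries[args] = (now, value)   # store with the time it was computed
            return value
        return wrapper
    return decorator

calls = 0

@ttl_cache(ttl_seconds=30)
def load_config(name: str) -> dict:
    global calls
    calls += 1
    return {"name": name}  # stands in for a slow database or API call

load_config("pricing")
load_config("pricing")
print(calls)  # 1
```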

HTTP has several caching mechanisms built in, such as cache headers (Cache-Control) and ETags. Use all of them. One less hit to your backend is one less scaling woe.
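To show how ETags save work, here is a framework-agnostic sketch of a conditional GET. The server derives an ETag from the response body. When a client sends that tag back in `If-None-Match`, the server answers `304 Not Modified` with an empty body instead of resending the content. The function name, the tuple-shaped response, and the `max_age` default are assumptions for illustration. In a real web framework you would set these headers on its own response object.

```python
import hashlib
from typing import Dict, Optional, Tuple

Response = Tuple[int, Dict[str, str], bytes]  # (status, headers, body)

def conditional_get(body: bytes, if_none_match: Optional[str], max_age: int = 60) -> Response:
    """Serve `body`, or an empty 304 if the client's cached copy is still current.

    The ETag is a hash of the body, so it changes exactly when the content does.
    """
    etag = '"' + hashlib.sha256(body).hexdigest() + '"'
    headers = {"ETag": etag, "Cache-Control": f"public, max-age={max_age}"}
    if if_none_match is not None:
        # If-None-Match may list several tags and uses weak comparison,
        # so a W/ prefix is ignored when matching.
        candidates = {t.strip().removeprefix("W/") for t in if_none_match.split(",")}
        if "*" in candidates or etag in candidates:
            return 304, headers, b""
    return 200, headers, body

status, headers, _ = conditional_get(b'{"price": 10}', None)
status2, _, body2 = conditional_get(b'{"price": 10}', headers["ETag"])
print(status, status2, body2)  # 200 304 b''
```

Hashing the body still means generating it. The larger savings come from skipping the transfer and from letting `Cache-Control` keep clients and proxies from asking at all until `max-age` expires.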

If you are not using connection and object pooling in your application, now is the time to look into them: creating and closing connections (and objects) gets expensive, especially under heavy load.
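The core idea of a connection pool fits in a few dozen lines. Connections are opened lazily up to a maximum, handed out for the duration of a `with` block, and returned for reuse instead of being closed. This is an illustrative sketch. In practice you would use the pool built into your database driver or HTTP client. `ConnectionPool` and `open_connection` are hypothetical names, and `open_connection` stands in for whatever actually opens a connection.

```python
import queue
import threading
from contextlib import contextmanager
from typing import Callable, Generic, Iterator, TypeVar

T = TypeVar("T")

class ConnectionPool(Generic[T]):
    """Reuses up to max_size connections instead of opening one per request."""

    def __init__(self, factory: Callable[[], T], max_size: int) -> None:
        self._factory = factory              # opens one new connection
        self._max_size = max_size
        self._idle: "queue.LifoQueue[T]" = queue.LifoQueue()
        self._created = 0
        self._lock = threading.Lock()

    @contextmanager
    def connection(self, timeout: float = 5.0) -> Iterator[T]:
        conn = self._acquire(timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)             # return it for reuse rather than closing

    def _acquire(self, timeout: float) -> T:
        try:
            return self._idle.get_nowait()   # fast path: reuse an idle connection
        except queue.Empty:
            pass
        with self._lock:
            can_create = self._created < self._max_size
            if can_create:
                self._created += 1
        if can_create:
            try:
                return self._factory()
            except Exception:
                with self._lock:
                    self._created -= 1       # opening failed; free the slot
                raise
        # Pool exhausted: wait for a connection to come back (queue.Empty on timeout).
        return self._idle.get(timeout=timeout)

opened = 0

def open_connection() -> object:
    global opened
    opened += 1
    return object()  # stands in for a real database or HTTP connection

pool = ConnectionPool(open_connection, max_size=2)
for _ in range(100):
    with pool.connection() as conn:
        pass  # run a query with conn here
print(opened)  # 1
```

A production pool would also check connection health before reuse and discard broken connections instead of returning them to the pool.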

Know your limits

If you are doing this exercise for the first time, your stack (servers, libraries, VMs, the OS, and dependent components such as databases and caches) is probably running with the defaults that came out of the box.

Identify all the parameters (configurations) that matter and tune them: connection limits, file descriptor (IO) limits, thread counts, timeouts, pool sizes, and memory settings.
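File descriptor limits are a classic out-of-the-box default that bites under load, because every open socket consumes a descriptor. The sketch below uses Python's Unix-only `resource` module to raise the current process's soft limit toward a target, capped at the hard limit. The function name and the 65,536 target are assumptions. Raising the hard limit itself, or making the change permanent, is done in OS or service-manager configuration, not from inside the process.

```python
import resource  # Unix-only standard-library module

def raise_open_file_limit(target: int) -> tuple:
    """Raise this process's soft RLIMIT_NOFILE toward `target`; return (soft, hard).

    An unprivileged process may raise its soft limit only up to the hard limit.
    The function never lowers an existing limit.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if soft == resource.RLIM_INFINITY:
        return soft, hard                                # already unlimited
    ceiling = target if hard == resource.RLIM_INFINITY else min(target, hard)
    if ceiling > soft:
        try:
            resource.setrlimit(resource.RLIMIT_NOFILE, (ceiling, hard))
        except (ValueError, OSError):
            pass  # the OS refused (e.g. a system-wide cap); keep the current limit
    return resource.getrlimit(resource.RLIMIT_NOFILE)

print(raise_open_file_limit(65_536))
```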

Trace the critical paths in your application and identify the third-party dependencies (external services from other vendors) on these paths. Talk to these third parties and make sure they are ready to handle the extra load coming from you; do not let them be the weakest link in the chain.

All cloud providers have soft limits (quotas) on resources. Talk to your cloud provider(s), check with your account manager, and request limit increases for everything you expect to need.

The chink in your scaling armor

The database is going to be the chink in your scaling armor. Use your traffic guesstimate to make sure that your database will hold up. Know its limits (see the previous section) and tune it for performance. At the same time, do not underestimate modern-day databases and servers. They can handle a ridiculous amount of load as long as you are not doing something stupid (if you are a hyper-growth startup, you probably are doing multiple stupid things; time to fix those).
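One way to apply the traffic guesstimate to the database is to translate it into reads and writes per second and compare those with what your database has been measured to sustain. The model below is a hypothetical sketch. Its inputs (200,000 concurrent users, one request every 10 seconds, 3 reads and 1 write per request, 80% of reads served from cache) are placeholders, and it assumes caching absorbs reads but not writes.

```python
from typing import Tuple

def database_qps(concurrent_users: int, seconds_between_requests: float,
                 reads_per_request: float, writes_per_request: float,
                 read_cache_hit_ratio: float = 0.0) -> Tuple[float, float]:
    """Return (reads/s, writes/s) that actually reach the database."""
    request_rps = concurrent_users / seconds_between_requests
    reads = request_rps * reads_per_request * (1.0 - read_cache_hit_ratio)
    writes = request_rps * writes_per_request  # caching does not remove writes
    return reads, writes

reads, writes = database_qps(200_000, 10, 3, 1, read_cache_hit_ratio=0.8)
print(f"{reads:,.0f} reads/s, {writes:,.0f} writes/s")
```

With these placeholder numbers, caching shrinks reads to 12,000 per second, but writes stay at 20,000 per second. This is why write-heavy traffic needs a different strategy.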

If you believe your database will not be able to service the load, cache, cache, and then cache some more. If your traffic is write-heavy, then pray to God. Or use a queue (async processing) and/or figure out a way to delay and spread out the writes (a write-back cache).
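The queue-and-delay idea can be sketched as a write-behind buffer. Request handlers enqueue records and return immediately, and a background thread periodically drains the queue and writes in batches (for example, one multi-row insert per batch), turning a burst of small writes into a steadier stream of larger ones. This in-process version is only an illustration. Anything still in the queue is lost if the process dies, which a durable message queue would avoid. `WriteBehindQueue` and the `write_batch` callback are hypothetical names.

```python
import queue
import threading
from typing import Callable, Dict, List

class WriteBehindQueue:
    """Absorbs write bursts in memory and hands them to write_batch in batches."""

    def __init__(self, write_batch: Callable[[List[Dict]], None], batch_size: int = 100,
                 flush_interval_s: float = 1.0, max_pending: int = 100_000) -> None:
        self._write_batch = write_batch      # e.g. one multi-row INSERT per call
        self._batch_size = batch_size
        self._interval = flush_interval_s
        self._pending: "queue.Queue[Dict]" = queue.Queue(maxsize=max_pending)
        self._stop = threading.Event()
        self._worker = threading.Thread(target=self._run, daemon=True)

    def submit(self, record: Dict) -> None:
        """Called on the request path; returns at once unless the queue is full."""
        self._pending.put(record)            # blocks when full: back-pressure, not data loss

    def flush(self) -> int:
        """Write out queued records, batch_size at a time, until the queue is empty."""
        written = 0
        while True:
            batch: List[Dict] = []
            while len(batch) < self._batch_size:
                try:
                    batch.append(self._pending.get_nowait())
                except queue.Empty:
                    break
            if not batch:
                return written
            self._write_batch(batch)         # a real system would retry on failure
            written += len(batch)

    def start(self) -> None:
        self._worker.start()

    def stop(self) -> None:
        self._stop.set()
        self._worker.join()                  # the worker flushes once more before exiting

    def _run(self) -> None:
        # Event.wait returns False on timeout and True once stop() is called.
        while not self._stop.wait(self._interval):
            self.flush()
        self.flush()

batches: List[List[Dict]] = []
wb = WriteBehindQueue(batches.append, batch_size=100)
for i in range(250):
    wb.submit({"event_id": i})
print(wb.flush(), [len(b) for b in batches])  # 250 [100, 100, 50]
```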

The ever-seeing eye

Have observability in place. Murphy’s law states that whatever can go wrong will go wrong. When that happens during a stressful, high-decibel event like this marketing campaign and you are running blind, cortisol levels go through the roof and tear you to pieces. Observability is the calming friend you lean on during these pressing times.
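At a minimum, you want to see request count, error rate, and latency percentiles for your critical endpoints. The sketch below records all three in-process with a decorator. It stands in for a real metrics or APM library, which would also export the numbers to a dashboard and alert on them. `Metrics`, `timed`, and `handle` are hypothetical names, and storing every latency sample is only acceptable for a demonstration. Real systems aggregate samples into histograms.

```python
import functools
import statistics
import threading
import time
from typing import Dict, List

class Metrics:
    """Minimal in-process request metrics: count, errors, latency percentiles."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.count = 0
        self.errors = 0
        self._latencies_ms: List[float] = []  # unbounded: demo only

    def timed(self, fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            failed = False
            try:
                return fn(*args, **kwargs)
            except Exception:
                failed = True
                raise
            finally:
                elapsed_ms = (time.perf_counter() - start) * 1000
                with self._lock:
                    self.count += 1
                    if failed:
                        self.errors += 1
                    self._latencies_ms.append(elapsed_ms)
        return wrapper

    def snapshot(self) -> Dict[str, float]:
        with self._lock:
            data = list(self._latencies_ms)
            count, errors = self.count, self.errors
        if len(data) >= 2:
            cuts = statistics.quantiles(data, n=100, method="inclusive")  # p1..p99
            p50, p99 = cuts[49], cuts[98]
        else:
            p50 = p99 = data[0] if data else 0.0
        return {"requests": count, "error_rate": errors / count if count else 0.0,
                "p50_ms": p50, "p99_ms": p99}

metrics = Metrics()

@metrics.timed
def handle(order_id: int) -> str:
    if order_id < 0:
        raise ValueError("bad order id")
    return "ok"

for i in range(10):
    handle(i)
try:
    handle(-1)
except ValueError:
    pass
snap = metrics.snapshot()
print(snap["requests"], round(snap["error_rate"], 3))  # 11 0.091
```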

Conclusion

Keep a checklist of all of these and tick items off as you close them. A list is critical: these can be stressful times, and it is easy to lose track of what needs to be done, what has been done, and what is pending.

You might be wondering why I did not ask you to do a performance test.

Doing a performance test and finding all the bottlenecks would be the theoretical (and systematic) way to go about this. The problem is that carrying out a performance test is challenging and requires expertise and experience. Mimicking real-world traffic and user behavior in a test is also tough. If you know how to do that, you probably already know all the scaling tricks I mentioned here (and you are not the audience for this post).

In case it is not obvious, I am borrowing the term Blitzscaling, coined by Reid Hoffman.

Image by Johnny Gutierrez from Pixabay
